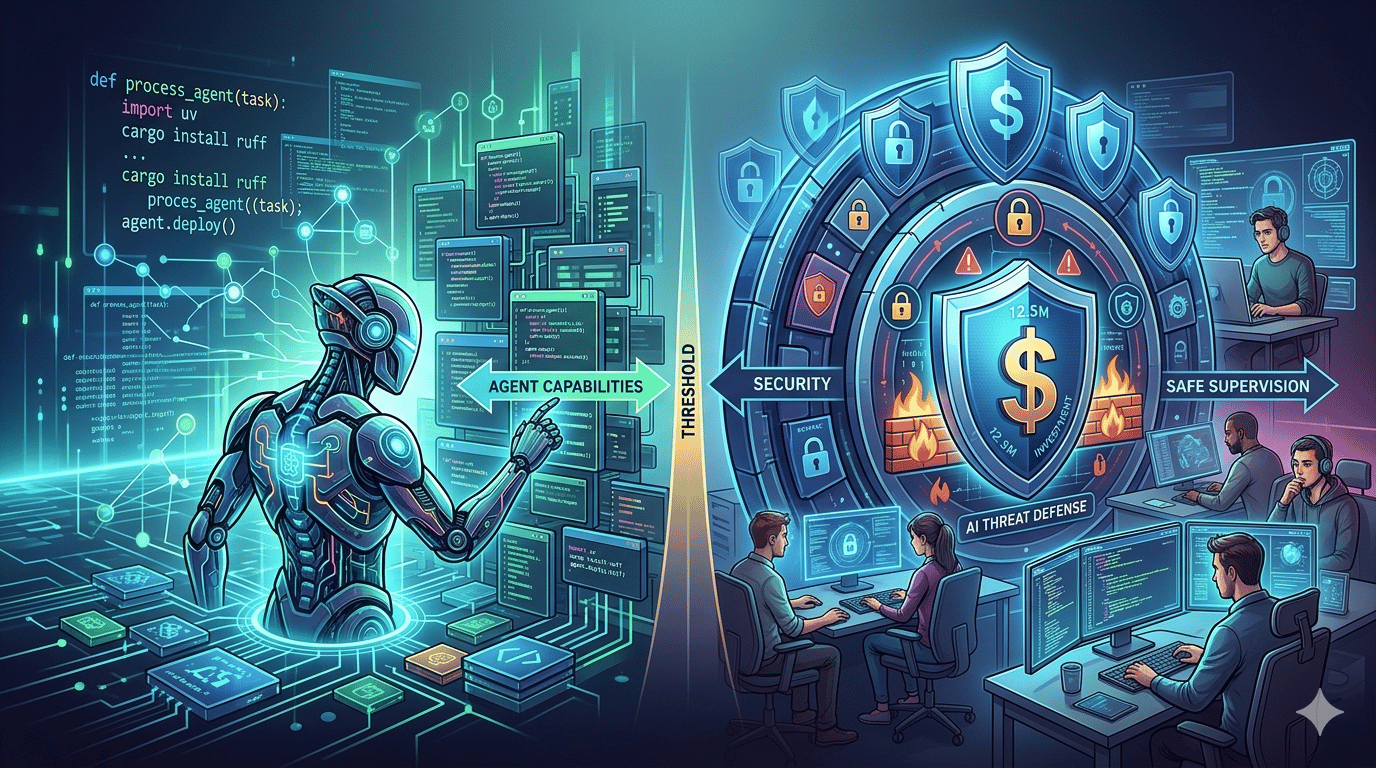

AI coding agents are crossing a threshold this week, and the industry is starting to feel the weight of that. From OpenAI buying the team behind uv and ruff to a $12.5M defensive investment against AI-generated threats, the ecosystem is simultaneously building more capable agents and trying to armor itself against the consequences. The gap between what agents can do and what developers can safely supervise is widening fast.

Trend(s) to Watch

AI Tooling for Developers Is in a Precarious State

Experts quoted in a recent DevClass piece describe the current state of AI tools for developers as genuinely dangerous, and they are not being dramatic. The specific concern is agent swarms: tools like Claude Code can now spin up teams of sub-agents that execute tasks in parallel, which sounds efficient until you realize the developer reviewing the output is increasingly just a rubber stamp. The cost dimension is also real. Complex agentic tasks chew through token budgets at a rate that surprises teams running them at scale, and hitting context limits mid-workflow can corrupt state in ways that are hard to debug. If your team is adopting agentic coding tools right now, the question worth asking is not how much faster you are shipping but how much of what ships you actually understand.
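To make the cost point concrete, here is a minimal back-of-the-envelope sketch for tallying what a swarm of parallel sub-agents consumes. Everything in it is an assumption for illustration: the `SubAgentRun` record, the per-million-token prices, and the context limit are placeholders, not figures from the DevClass piece or from any vendor's price list.

```python
from dataclasses import dataclass


@dataclass
class SubAgentRun:
    """Hypothetical record of one sub-agent's token usage."""
    name: str
    input_tokens: int
    output_tokens: int


def estimate_cost(runs, input_price_per_mtok, output_price_per_mtok):
    """Return the total dollar cost for a list of sub-agent runs.

    Prices are per million tokens and are placeholders, not real rates.
    """
    total_in = sum(r.input_tokens for r in runs)
    total_out = sum(r.output_tokens for r in runs)
    return (total_in * input_price_per_mtok
            + total_out * output_price_per_mtok) / 1_000_000


def over_limit(runs, context_limit):
    """Return the names of sub-agents whose total tokens exceed the limit."""
    return [r.name for r in runs
            if r.input_tokens + r.output_tokens > context_limit]


# Illustrative numbers only.
runs = [
    SubAgentRun("planner", input_tokens=1_000_000, output_tokens=0),
    SubAgentRun("implementer", input_tokens=0, output_tokens=1_000_000),
]
total = estimate_cost(runs, input_price_per_mtok=3.0, output_price_per_mtok=15.0)
flagged = over_limit(runs, context_limit=500_000)
```

Logging these two numbers per workflow is a cheap way to spot the runs that are both expensive and likely to hit a context ceiling before they finish.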

A $12.5M Defense Fund for Open Source Against AI-Generated Threats

AWS and a coalition of other companies have pooled $12.5M to protect the open source ecosystem from AI-generated security threats. The framing here is worth unpacking: the concern is not just that AI writes buggy code, but that AI can generate plausible-looking malicious contributions at a volume that overwhelms human reviewers. Maintainers of widely-used projects are already stretched thin. Flooding them with AI-authored pull requests, whether accidentally or deliberately, could degrade the signal-to-noise ratio of open source review to the point of breaking it. The investment is early and the tooling to address this is largely unproven, but the fact that major infrastructure providers are treating this as a funded problem rather than a theoretical one is a signal worth noting.

One thing to try this week

If your team is using any agentic coding tool, spend 30 minutes auditing the last five agent-generated pull requests with fresh eyes. Not to check for bugs, but to ask honestly: could you have caught a subtle logic error or security issue in the time your review actually took? The answer calibrates how much trust you should be extending to the agent right now.

Self-Hosted Tool

Building a Locally Hosted Voice Assistant That Actually Works

A detailed Home Assistant community post walks through one developer's multi-month effort to get a locally hosted voice assistant to a state they would describe as reliable and enjoyable, two words that have historically not applied to offline voice setups. The post covers hardware choices, model selection, latency tradeoffs, and the specific configuration that finally made it feel usable. If you have tried local voice assistants before and abandoned them after fighting wake word accuracy or response lag, this writeup is worth reading because it is honest about where the pain points still exist rather than pretending the problem is solved.

Developer Tool

A Teleprompter That Scrolls to Your Voice, Entirely in the Browser

promptme-ai is a browser-based teleprompter that tracks your speech using on-device ASR and scrolls the script to match your pace, no server required. The implementation runs speech recognition via the Web Speech API or a bundled model. With the bundled model it works offline and does not send your audio anywhere; be aware that some browsers, Chrome among them, back the Web Speech API with a server-based recognizer, so that path may not carry the same guarantee. It is a narrow tool with an obvious audience: anyone recording technical walkthroughs, conference talks, or demos who wants to stay on script without hiring an operator. The fact that it requires no backend makes it trivially deployable as a static page.
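The voice-tracking idea can be sketched in a few lines. This is not promptme-ai's actual implementation, which the writeup does not detail; it is one simple way to follow a speaker, assuming the recognizer hands back chunks of text. The function names and the `lookahead` window size are hypothetical.

```python
import re


def normalize(text):
    """Lowercase the text and split it into words, dropping punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def advance_position(script_words, position, heard_words, lookahead=8):
    """Move the script position forward to follow recognized speech.

    Each heard word is searched for in a small window ahead of the current
    position. A match moves the position just past the matched word; words
    that do not match (misrecognitions, ad-libs) are ignored.
    """
    for word in heard_words:
        window = script_words[position:position + lookahead]
        if word in window:
            position += window.index(word) + 1
    return position


script = normalize("Welcome to the demo. Today we will look at the new build system.")

# The speaker adds a filler word, then skips a word from the script.
pos = advance_position(script, 0, normalize("welcome to uh the demo"))
pos_after_skip = advance_position(script, pos, normalize("today we look"))

# Fraction of the script already spoken, usable as a scroll offset.
scroll_fraction = pos_after_skip / len(script)
```

The bounded window is the design choice worth noting: it tolerates skipped or misheard words without letting a common word like "the" yank the scroll position far down the page.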

AI Tool(s) of the Week

GPT-5.4 Can Now Navigate Software UIs on Its Own

OpenAI shipped GPT-5.4 on March 5, 2026, with an 83% success rate on a benchmark of real-world job tasks, covering document creation, spreadsheet work, and legal analysis. The more notable addition is native computer-use capability: the model can navigate arbitrary software interfaces via screenshots and commands without requiring custom API integrations. That last part matters because it means GPT-5.4 can operate inside tools that were never designed to be automated. Whether that reads as useful or alarming probably depends on what software sits on your machine.

OpenAI Buys the Team Behind uv, ruff, and ty

OpenAI acquired Astral, the company behind three of the most-adopted Python developer tools of the past two years, to integrate their work into the Codex AI coding agent. uv replaced pip workflows for a significant portion of the Python community, ruff became a default linter for many projects, and ty is a newer type checker from the same team. The acquisition is a bet that a coding agent with tight, low-level control over the Python toolchain will produce more reliable output than one operating at arm's length from the runtime. The non-obvious concern is what this means for the independence of those tools going forward, since they are now assets of a company with a specific commercial interest in their direction.

Open Source Project

Vesper Automates ML Dataset Pipelines via MCP

Vesper is an MCP server designed to let AI agents handle dataset workflows without manual pipeline intervention. It is an early-stage project and the documentation reflects that, so calibrate expectations accordingly. The concept is sound: dataset wrangling is one of the more tedious bottlenecks in ML work, and if an agent can manage ingestion, versioning, and preprocessing autonomously via a standardized protocol, that removes a category of interrupt-driven work from practitioners. Worth watching if you run recurring ML pipelines and are already comfortable with the MCP ecosystem.
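For readers new to MCP, this is roughly what an agent sends when it asks a server to run a tool. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The tool name `ingest_dataset` and its arguments below are hypothetical; Vesper's real tool names are not given here, so check its documentation before relying on any of them.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC requires one per request.
_request_ids = count(1)


def build_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 request for MCP's tools/call method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(
    "ingest_dataset",
    {"source": "data/raw.csv", "version": "v1"},
)
print(json.dumps(request, indent=2))
```

Because the envelope is standardized, the same agent code can talk to any MCP server; only the tool names and argument schemas change.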

Did you know?

The concept of a program that controls another program by simulating user input is older than the modern desktop. In the 1980s, a technique called "keyboard macros" allowed mainframe terminals to replay sequences of keystrokes, effectively automating workflows that had no programmatic API. The idea was largely considered a workaround for systems that refused to expose proper interfaces. What GPT-5.4 is doing with screenshot-based UI navigation is structurally the same workaround, just with a vision model replacing the hardcoded keystroke sequences. The fact that we are still solving this problem the same way, forty years later, says something about how rarely software actually exposes the interfaces its users need.

The week's stories share a single tension: the tooling is ahead of the governance. AI agents can now write code, navigate UIs, manage datasets, and open pull requests, but the frameworks for deciding when to trust that output, and who bears the cost when it is wrong, are still being assembled. The open question is whether the $12.5M defensive fund, the agent cost warnings, and the acquisition of foundational tooling are early signs of the ecosystem self-correcting, or just the start of a much harder reckoning ahead.