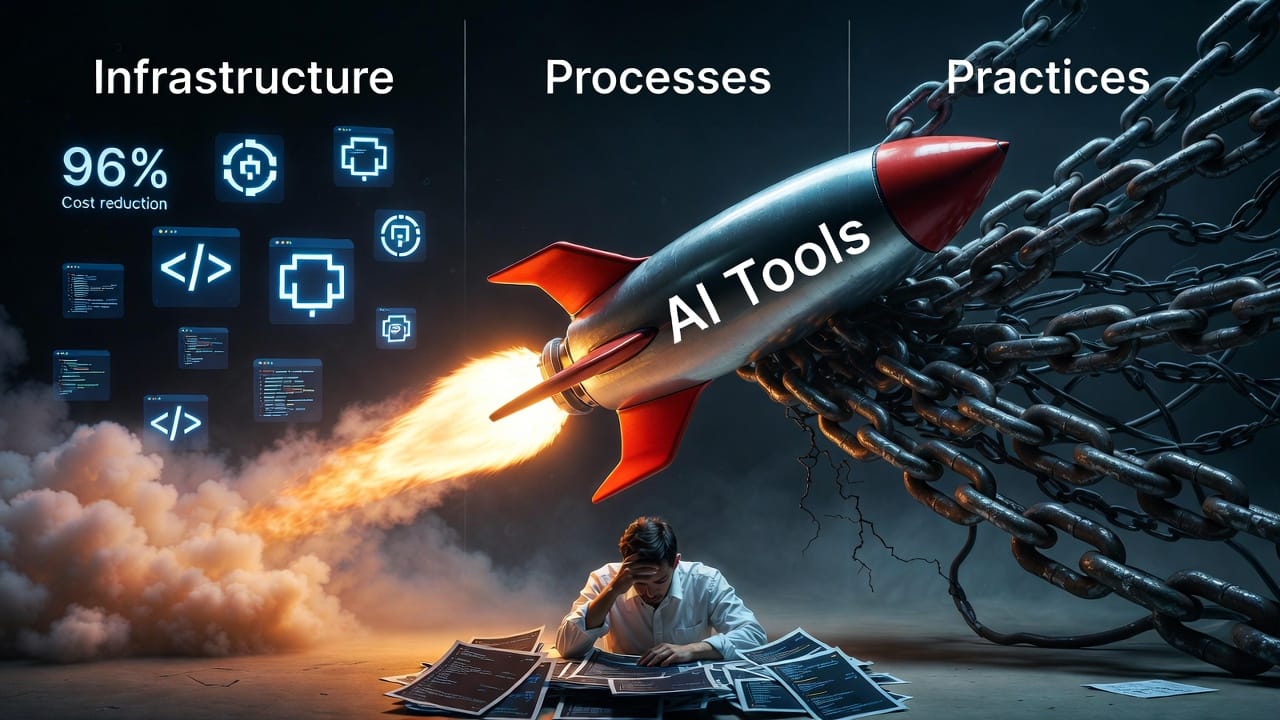

AI tooling is accelerating what developers can ship, but the infrastructure, processes, and practices surrounding that output are struggling to keep up. From a 96% token cost reduction to developer burnout from unreviewed AI-generated code, the gap between capability and maturity is the story. Let's get into it.

Trends to Watch

AI coding speeds dev work and is quietly burning out your team

A new report from Harness lands a finding that should stop any engineering manager mid-scroll: AI tools are producing code faster than teams can safely review, test, and deploy it. The result is more rework, more risk exposure, and in some cases developer burnout from managing the downstream consequences of raw velocity.

Think of it like this: AI hands you a fire hose while your team is still working with buckets. You're moving more water, but the floor is soaked. The core recommendation is to modernize CI/CD pipelines so they can handle AI-generated output at scale. Easier said than funded, but this report is a useful data point for making the internal case. If you've been trying to justify DevOps investment alongside AI tool adoption, print this one out.

A 40-year-old idea nobody adopted might finally be having its moment

Literate programming, where documentation and code are woven together as a single artifact rather than maintained separately, never really took off in mainstream development. Developers wrote code, and documentation was the thing you added afterward (or, let's be honest, didn't).

The argument making the rounds now is that AI agents read your codebase differently than humans do. When an agent is consuming code to take action, the density and structure of explanatory text embedded in that code may matter far more than it ever did for a human reader skimming for variable names. Your inline comments and docstrings aren't just documentation anymore; they're potentially part of the agent's reasoning context.

This is a short read worth passing to anyone thinking seriously about how codebases will be authored and consumed in an agentic workflow. The context around a 40-year-old idea has quietly shifted.
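As a rough illustration of what "explanatory text woven into code" could look like, here is a small sketch. The function, its parameters, and the retry scenario are all hypothetical and chosen only for illustration; the point is that the docstring records the reasoning, not just the signature, so a reader (human or agent) gets the "why" alongside the "what".

```python
def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0) -> float:
    """Return how many seconds to wait before retry number `attempt` (0-based).

    Why exponential: doubling the wait gives a struggling service room to
    recover instead of being hit again at a fixed interval.

    Why a cap: without one, a long outage would push the wait to minutes or
    hours, and callers would appear to hang. `cap` bounds the worst case.
    """
    if attempt < 0:
        raise ValueError("attempt must be non-negative")
    # Doubling per attempt, bounded by the cap described in the docstring.
    return min(cap, base * (2 ** attempt))


print(backoff_delay(0))   # 0.5
print(backoff_delay(3))   # 4.0
print(backoff_delay(10))  # 30.0 (capped)
```

The logic is a single line; most of the block is explanation. That ratio is the trade-off the literate style asks you to accept.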

Morgan Stanley predicts a major AI capability jump in H1 2026

Morgan Stanley's forecast centers on compute scaling driving a step-change in AI capability during the first half of this year, with OpenAI's GPT-5.4 already cited as matching human experts on certain benchmarks. The Elon Musk framing around '10x compute doubling intelligence' is speculative and worth treating as such.

The underlying scaling thesis, however, is consistent with what most frontier labs are pursuing. The practical question for engineering and product teams isn't whether to believe the forecast; it's whether your architecture decisions over the next six months assume things will mostly stay the same. If the capability floor shifts significantly mid-year, 'we'll evaluate then' is a strategy with a cost.

One thing to try this week

Audit your last 10 merged PRs that involved AI-generated code. How many had substantive human review vs. a quick skim? The answer might be uncomfortable and useful.
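If you want to make the audit a little less subjective, a minimal sketch like the one below may help. Everything in it is an assumption: the `MergedPR` fields, the sample numbers, and the thresholds for what counts as a "skim" are made up for illustration, and you would fill the list from whatever your code host actually reports.

```python
from dataclasses import dataclass


@dataclass
class MergedPR:
    number: int            # hypothetical PR id
    lines_changed: int
    review_comments: int   # human review comments left before merge
    review_minutes: float  # time a reviewer spent before approving


def looks_skimmed(pr: MergedPR, min_comments: int = 1,
                  min_seconds_per_line: float = 2.0) -> bool:
    """Flag a PR whose review was probably a quick skim.

    Assumed heuristic: no comments at all, or less than a couple of
    seconds of reviewer time per changed line. Tune both thresholds.
    """
    if pr.lines_changed == 0:
        return False
    seconds_per_line = pr.review_minutes * 60 / pr.lines_changed
    return (pr.review_comments < min_comments
            or seconds_per_line < min_seconds_per_line)


# Made-up sample data standing in for your last few AI-assisted PRs.
recent_prs = [
    MergedPR(number=101, lines_changed=400, review_comments=0, review_minutes=2.0),
    MergedPR(number=102, lines_changed=120, review_comments=3, review_minutes=15.0),
    MergedPR(number=103, lines_changed=900, review_comments=1, review_minutes=5.0),
]

skimmed = [pr.number for pr in recent_prs if looks_skimmed(pr)]
print(f"{len(skimmed)} of {len(recent_prs)} PRs look skimmed: {skimmed}")
# 2 of 3 PRs look skimmed: [101, 103]
```

A heuristic like this will not tell you whether a review was good, only where to look first.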

Open Source Project

NVIDIA is now a software company (kind of)

Here's the real story behind NemoClaw, NVIDIA's new open-source enterprise agent platform: a hardware company is now shipping a full software agent stack. That's a meaningful strategic shift worth sitting with.

NemoClaw bundles the NeMo framework, Nemotron models, and NIM microservices into what amounts to a fully assembled kit for building AI agents at work, so you don't have to source every piece yourself. It's designed with enterprise security requirements in mind, which sets it apart from more research-oriented open-source projects. Early conversations with Salesforce, Cisco, and Google suggest the ecosystem is forming quickly, ahead of the formal GTC announcement.

For teams evaluating agentic infrastructure, this is one of the more complete stacks to emerge from a vendor that isn't a pure-play AI company. Whether you trust NVIDIA as a software partner is a separate question.

Developer Tool

One CLI for every API — and a 96% token cost reduction

mcp2cli takes MCP servers and exposes them as standard CLI tools, with a headline claim of 96 to 99 percent fewer tokens than native MCP implementations. That number sounds made up until you think about what it means in practice: if your team is running 1,000 AI-assisted queries a day at $50 in inference cost, a 96 to 99 percent cut would bring that down to somewhere between $2 and $0.50, assuming the savings apply to the whole bill. Token overhead isn't a trivial concern at scale. It hits both your bill and your context window.

The approach also makes MCP-backed APIs accessible to scripts and pipelines that have no awareness of the MCP protocol, which quietly solves a real integration headache. Worth evaluating if you're already running MCP infrastructure and watching your inference bill. The ROI math is pretty simple.
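The ROI math can be sketched in a few lines. This is back-of-the-envelope only: it assumes cost scales linearly with tokens and that the claimed reduction applies to your entire inference bill, which it may not if only part of your traffic goes through MCP. The function name is illustrative; the $50 and 1,000-query figures come from the example above.

```python
def projected_daily_cost(baseline_cost: float, token_reduction: float) -> float:
    """Daily cost after cutting token usage by `token_reduction` (0.0 to 1.0).

    Assumes cost is proportional to tokens and the reduction covers
    the whole bill (a simplification).
    """
    if not 0.0 <= token_reduction <= 1.0:
        raise ValueError("token_reduction must be between 0 and 1")
    return baseline_cost * (1.0 - token_reduction)


baseline = 50.00          # dollars per day, from the example above
queries_per_day = 1000

for reduction in (0.96, 0.99):
    cost = projected_daily_cost(baseline, reduction)
    per_query = cost / queries_per_day
    print(f"{reduction:.0%} fewer tokens: ${cost:.2f}/day, ${per_query:.4f}/query")
# 96% fewer tokens: $2.00/day, $0.0020/query
# 99% fewer tokens: $0.50/day, $0.0005/query
```

Swap in your own baseline and the share of traffic that actually runs through MCP to get a number you can defend.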

AI Tool of the Week

Anthropic ships Cowork: agents that work inside your actual files

Cowork is a no-code agent built on Claude that operates directly within a user's files and applications on the desktop. The significance here isn't the specific feature set; it's the category shift. Production-ready agents that work on local file systems are a fundamentally different kind of tool than a chatbot or an API integration.

For non-developers on your team, this is the moment where 'AI assistant' stops meaning 'thing you chat with' and starts meaning 'thing that does work on your behalf inside the tools you already use.' For developers, the more interesting question is what the underlying architecture looks like and how it handles permissions and context across applications.

Anthropic is clearly moving from research demonstrations toward shipping things that non-technical users can actually run. That has implications for how the rest of us think about building on top of these models.

Open Source Project

Skir wants to be a simpler Protocol Buffers… for the right team

Skir is a serialization tool positioning itself as a lighter alternative to Protocol Buffers, using a single YAML config file as its schema definition. The comparison to protobuf should be treated cautiously. This is early-stage software without significant production mileage.

That said, the pain point it addresses is real. Schema management across multiple languages adds meaningful friction, and lighter alternatives tend to find adoption quickly when they get the developer experience right. This probably won't replace protobuf at a large engineering org, but for a five-person team drowning in schema files across three languages, it's worth ten minutes of evaluation.

Did you know?

The word "token" in LLMs comes from lexical tokenization in linguistics, the same process linguists use to break language into meaningful units. Most common English words are 1-2 tokens, but a long word like "uncharacteristically" can take four, depending on the tokenizer. And "Buffalo buffalo Buffalo buffalo buffalo buffalo Buffalo buffalo" is a grammatically correct English sentence. Language is wild. AI is weirder.

That is the week. The gap between AI capability and deployment maturity is the story worth watching as the tooling continues to accelerate. More next week.