The gap between AI as a tool and AI as an actor is closing faster than most teams anticipated. This week's stories cluster around a single tension: enterprises are deploying autonomous agents at scale, while researchers and builders are quietly documenting what that actually breaks. Version control, software architecture, and even national operating system policy are all catching shrapnel.

Estimated Read Time: 6 minutes

Trends to Watch

The 88% adoption figure making the rounds this week sounds like a conference slide until you realize what it implies operationally

According to a Boston Institute of Analytics roundup, generative AI is now used in core functions at 88% of organizations, and the market hit $161 billion in 2026. The more consequential shift is not the number itself but what drove it: agentic frameworks that handle multi-step tasks without a human in the loop. Enterprises are not running pilots anymore. They are running workflows. The infrastructure assumptions underneath those workflows (logging, access control, rollback) are mostly borrowed from a pre-agent world.
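
To make the logging, access control, and rollback concerns concrete, here is a minimal sketch of an action journal for an agent workflow. Everything in it (the `ActionJournal` class, the action names, the in-memory `state` dict) is hypothetical and chosen for illustration; a real deployment would persist the log and handle undo steps that can themselves fail.

```python
from typing import Callable


class ActionJournal:
    """Records agent actions so they can be audited and undone (illustrative only)."""

    def __init__(self, allowed_actions: set[str]) -> None:
        self.allowed_actions = allowed_actions
        self.log: list[str] = []
        self._undo_stack: list[tuple[str, Callable[[], None]]] = []

    def perform(self, name: str, do: Callable[[], None], undo: Callable[[], None]) -> None:
        # Access control: refuse anything outside the allowlist, and log the refusal.
        if name not in self.allowed_actions:
            self.log.append(f"denied: {name}")
            raise PermissionError(name)
        do()
        self.log.append(f"done: {name}")
        self._undo_stack.append((name, undo))

    def rollback(self) -> None:
        # Undo completed actions in reverse order.
        while self._undo_stack:
            name, undo = self._undo_stack.pop()
            undo()
            self.log.append(f"undone: {name}")


# Hypothetical workflow: one permitted action, then one that is not on the allowlist.
state = {"replicas": 1}
journal = ActionJournal(allowed_actions={"scale_up"})
journal.perform(
    "scale_up",
    do=lambda: state.update(replicas=3),
    undo=lambda: state.update(replicas=1),
)
try:
    journal.perform("drop_table", do=lambda: None, undo=lambda: None)
except PermissionError:
    journal.rollback()

print(state)
print(journal.log)
```

The design choice worth noticing is that every action has to arrive with its own undo step, which is exactly the assumption that tends to be missing when agents are dropped into tooling built for human operators.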

Over one weekend, an AI agent compromised a FreeBSD system in four hours

The April 4-5 digest from The Neuron is dense, covering over 150 developments including OpenAI's COO departure ahead of a likely IPO and DeepSeek V4 running on Huawei chips, but the FreeBSD hack is the one that deserves a second read. Four hours from prompt to compromise is not a research demo. It is a benchmark. Security teams who have been treating autonomous agents as a future problem now have a timeline. The hardware angle on DeepSeek V4 matters too: if frontier-class models can run on non-Nvidia silicon, the geopolitical calculus around AI compute shifts considerably.

GitButler raised $17M to build what comes after git

The framing here is deliberate: not a better git, but a replacement for the mental model git represents. Version control was designed around a human writing code in discrete sessions. AI agents produce continuous, high-volume, multi-branch output that does not map cleanly onto commits and pull requests. Whether GitButler's specific approach is the right one is an open question, but the problem they are naming is real. If your codebase is increasingly generated rather than typed, the tooling around it needs to reflect that.

France is migrating government desktops from Windows to Linux

This is not the first government Linux initiative, and most previous ones stalled. What is different now is the stated motivation: reducing dependency on non-European software vendors in a period of geopolitical uncertainty. That framing shifts the risk calculus from "is Linux good enough" to "what is the cost of not controlling your own stack." Whether French bureaucrats will tolerate the transition friction is the actual test, but the policy signal is worth noting for anyone tracking sovereign infrastructure trends.

One thing to try this week

If your team is using AI coding tools on anything larger than a side project, pull up a recent PR and audit it specifically for design decisions, not bugs. The research covered below found that functional correctness is not the main failure mode in AI-generated code. Structural issues are. Spend twenty minutes asking whether the generated code made the right abstraction choices, not just whether it passes tests.

A paper on arXiv looked at large-scale projects generated by AI IDE tools and found that functional correctness, the thing benchmarks measure, is not where the real problems live. The design-level issues (poor separation of concerns, brittle coupling, modules that work but cannot be extended) are where generated code degrades in practice. This matters because most evaluation frameworks for AI coding tools are still built around whether the code runs. Teams shipping production systems need a different rubric.
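
One cheap way to start a design-focused audit is to count how many distinct modules each file imports, a rough proxy for coupling. The sketch below is not the paper's method; the file contents and the `THRESHOLD` cutoff are made up for illustration, and the function is written for absolute imports only.

```python
import ast


def import_fan_out(source: str) -> set[str]:
    """Return the top-level module names that a Python source file imports."""
    tree = ast.parse(source)
    modules: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.module:
            # "from . import x" has no module name and is skipped here.
            modules.add(node.module.split(".")[0])
    return modules


# Hypothetical files standing in for a generated PR.
files = {
    "billing.py": "import db\nimport emailer\nfrom http_client import get\nimport os\n",
    "emailer.py": "import smtplib\n",
}

THRESHOLD = 3  # arbitrary cutoff, tune for your codebase
report = {name: sorted(import_fan_out(src)) for name, src in files.items()}
flagged = [name for name, deps in report.items() if len(deps) > THRESHOLD]

for name, deps in report.items():
    print(name, deps)
print("review first:", flagged)
```

A high count does not prove a bad design, but it tells you which files to read first when the question is "did this make the right abstraction choices" rather than "does it pass."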

Self Hosted Tool

LunarGate: a self-hosted LLM gateway in Go

LunarGate is a self-hosted gateway written in Go that sits in front of multiple LLM providers and handles routing, retries, and observability through a single OpenAI-compatible API surface. The value here is straightforward: if you're calling more than one LLM provider in production, you want a single place to handle rate limits, fallbacks, and logging rather than wiring that logic into each service. The OpenAI-compatible interface means you can swap it in without changing client code. Early project, but the architecture is sound and Go is a reasonable choice for a latency-sensitive proxy layer.
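
The retry-then-fallback behavior a gateway centralizes can be sketched in a few lines. This is not LunarGate's code or API; the provider functions, `ProviderError`, and `route_with_fallback` are hypothetical stand-ins, and real providers would be HTTP clients rather than local functions.

```python
import time
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider callable when a request fails."""


def route_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
    retries_per_provider: int = 2,
    backoff_seconds: float = 0.0,
) -> tuple[str, str, list[str]]:
    """Try providers in order, retrying each; return (provider name, reply, log)."""
    log: list[str] = []
    for name, call in providers:
        for attempt in range(1, retries_per_provider + 1):
            try:
                reply = call(prompt)
                log.append(f"{name} attempt {attempt}: ok")
                return name, reply, log
            except ProviderError as exc:
                log.append(f"{name} attempt {attempt}: failed ({exc})")
                time.sleep(backoff_seconds * attempt)  # linear backoff between tries
    raise ProviderError("all providers failed: " + "; ".join(log))


# Hypothetical stand-ins for real provider clients.
def flaky_primary(prompt: str) -> str:
    raise ProviderError("rate limited")


def steady_backup(prompt: str) -> str:
    return f"echo: {prompt}"


name, reply, log = route_with_fallback(
    "hello", [("primary", flaky_primary), ("backup", steady_backup)]
)
print(name, reply)
for line in log:
    print(line)
```

Keeping this loop in one place is the whole argument for a gateway: the log it produces is the observability, and the provider order is the routing policy.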

Developer Tools

Instant 1.0: a backend built for the AI-coded app era

Instant 1.0 positions itself as a backend platform designed from the ground up for applications where much of the code is AI-generated. The pitch is that existing backend primitives assume a human wrote the client code and will maintain it, while AI-generated apps have different characteristics: they're iterated faster, the schema changes more often, and the developer may not have a deep mental model of what was generated. Whether that framing holds up under real workloads is worth testing, but it's a more precise value proposition than most "backend as a service" products manage.

Craft: Cargo-style builds for C and C++

Craft brings a TOML-based project configuration model to C and C++ development, modeled closely on Cargo. This is an early tool from a solo developer, so treat it as promising rather than production-ready. The problem it's targeting is real, though: C++ project setup with CMake or raw Makefiles has a learning curve that discourages experimentation, and Rust's developer experience around builds has raised expectations. A lighter-weight on-ramp for C/C++ projects has an obvious audience in embedded and systems developers who know the language but find the toolchain setup tedious.

AI Tools of the Week

Choosing a frontier model in 2026 is genuinely hard, and most comparisons are either outdated or vendor-funded

The Gurusup model comparison guide attempts a structured head-to-head across the four main frontier contenders. It is worth treating as a starting point rather than a verdict, since model capabilities shift fast enough that any static comparison has a shelf life measured in weeks. That said, use-case-driven selection, meaning picking based on whether you need code generation, reasoning, long context, or cost efficiency, is more durable than chasing benchmark scores.

Claude Managed Agents ships a pre-built framework on Anthropic-controlled infrastructure

Anthropic's move here is to lower the activation energy for deploying agents by handling the infrastructure layer. You get the orchestration, the retry logic, and the managed runtime without standing it up yourself. The tradeoff is the one you always make with managed services: less control, faster start. For teams that want to ship an agent-based product without becoming an LLM infrastructure team, this is a reasonable on-ramp. For teams with compliance requirements or latency constraints, the self-hosted path is still going to be necessary.

Open Source Projects

SmolVM: a sandbox for coding and computer-use agents

SmolVM is an open-source sandbox environment designed to let AI agents run code, interact with desktop interfaces, and manage state without touching the host system. Early-stage and freshly posted to GitHub, so calibrate expectations accordingly, but the problem it's addressing is real: most agent frameworks bolt tool-use onto a language model without any isolation layer, which makes production deployments risky. A proper sandbox with state management is a missing piece in a lot of agentic stacks right now.
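
For a sense of what even the thinnest isolation layer looks like, here is a sketch that runs agent-produced Python in a child process with a scratch directory and a time limit. It is not SmolVM's API, and it is not a real sandbox: the child still has the host's filesystem and network access. The `run_untrusted` helper is hypothetical.

```python
import subprocess
import sys
import tempfile


def run_untrusted(code: str, timeout_seconds: float = 5.0) -> dict:
    """Run a Python snippet in a child process with a throwaway working directory."""
    with tempfile.TemporaryDirectory() as scratch:
        try:
            result = subprocess.run(
                # -I is Python's isolated mode: ignores env vars and user site-packages.
                [sys.executable, "-I", "-c", code],
                cwd=scratch,
                capture_output=True,
                text=True,
                timeout=timeout_seconds,
            )
        except subprocess.TimeoutExpired:
            return {"status": "timeout", "stdout": "", "returncode": None}
    return {
        "status": "ok" if result.returncode == 0 else "error",
        "stdout": result.stdout,
        "returncode": result.returncode,
    }


print(run_untrusted("print(2 + 2)"))
print(run_untrusted("while True: pass", timeout_seconds=1.0)["status"])
```

The gap between this and a VM-backed sandbox (no filesystem boundary, no network policy, no persistent state to snapshot) is roughly the gap the project is trying to close.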

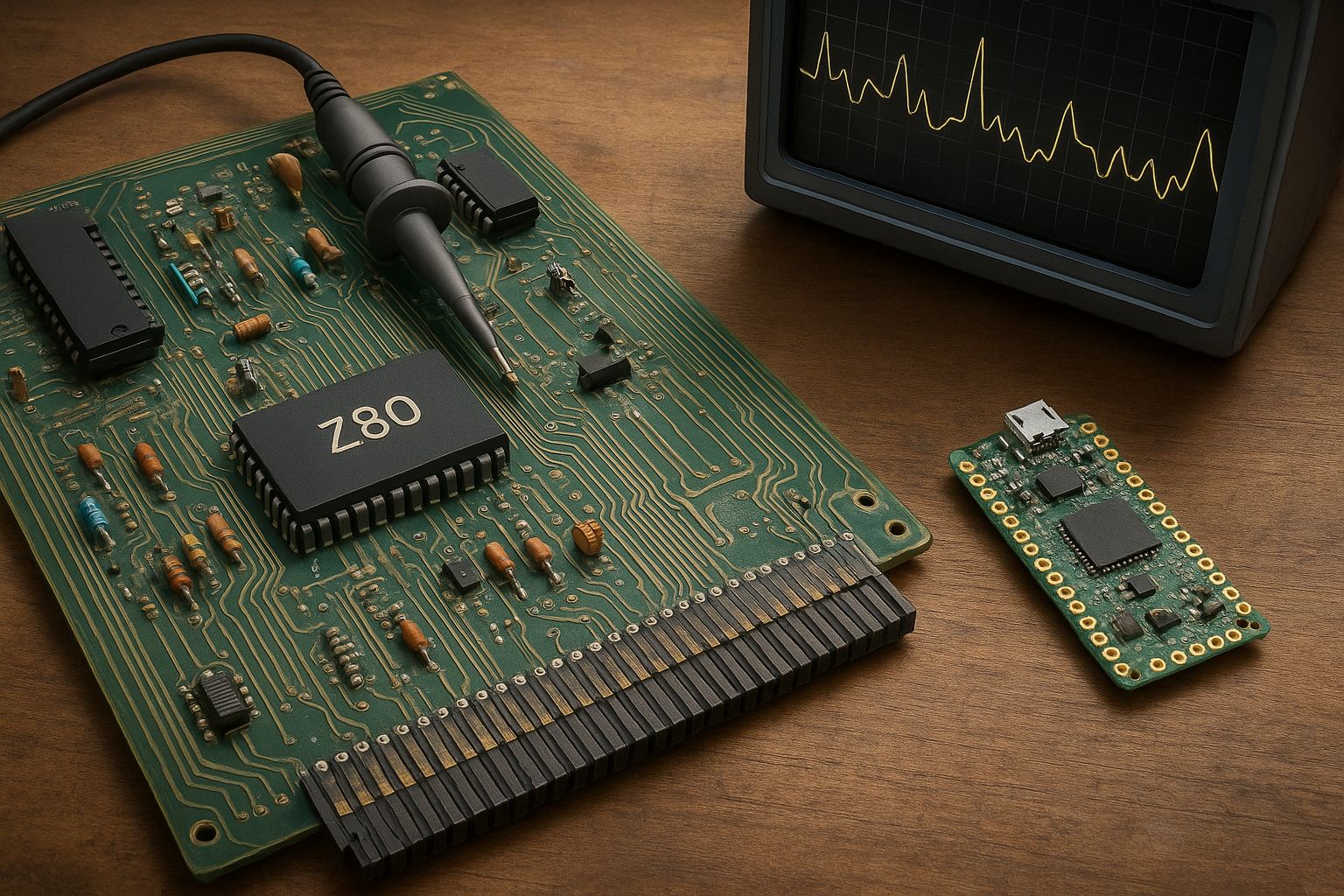

PicoZ80: a drop-in Z80 replacement using modern hardware

PicoZ80 replaces the original Zilog Z80 chip with a modern microcontroller that emulates it at the pin level, letting retro hardware run without sourcing increasingly scarce 40-year-old silicon. This is firmly in hobbyist territory, but it's a clean piece of engineering: the Z80 was used in everything from the ZX Spectrum to early CP/M machines, and keeping that hardware functional has genuine archival value. The interesting technical question is how accurately pin-level emulation can replicate the original timing behavior that some software depended on.

Did you know?

The concept of a software agent, a program that perceives its environment and acts autonomously toward a goal, predates the web. The term was formalized in AI research in the late 1980s, and by 1994, researchers at MIT were already publishing on software agents capable of delegating tasks across networks. The 2026 version of agentic AI is architecturally different, built on large language models rather than symbolic planners, but the core design question has not changed in thirty years: how do you specify what you want a system to do without specifying exactly how to do it? Thirty years of research could not fully answer it. We are finding out whether scale can.

Wrapping Things Up

The through-line this week is not adoption or market size but accountability: who is responsible when an agent modifies code, breaches a system, or makes a structural decision a human would have reviewed. The tooling growing up around agents (sandboxes, gateways, new version control models) is a bet that we can build the guardrails fast enough to keep pace with the deployment curve.